A creator sits in a dimly lit room in Seoul, staring at a screen where a version of herself—one she never filmed—is selling skincare products she has never used. Her voice is perfect. The tilt of her head is uncanny. Every micro-expression is a carbon copy of her own soul, harvested from a hundred different vlogs and processed through a machine that doesn't know her name.

This is the frontier. It is a place of breathtaking wonder and absolute terror.

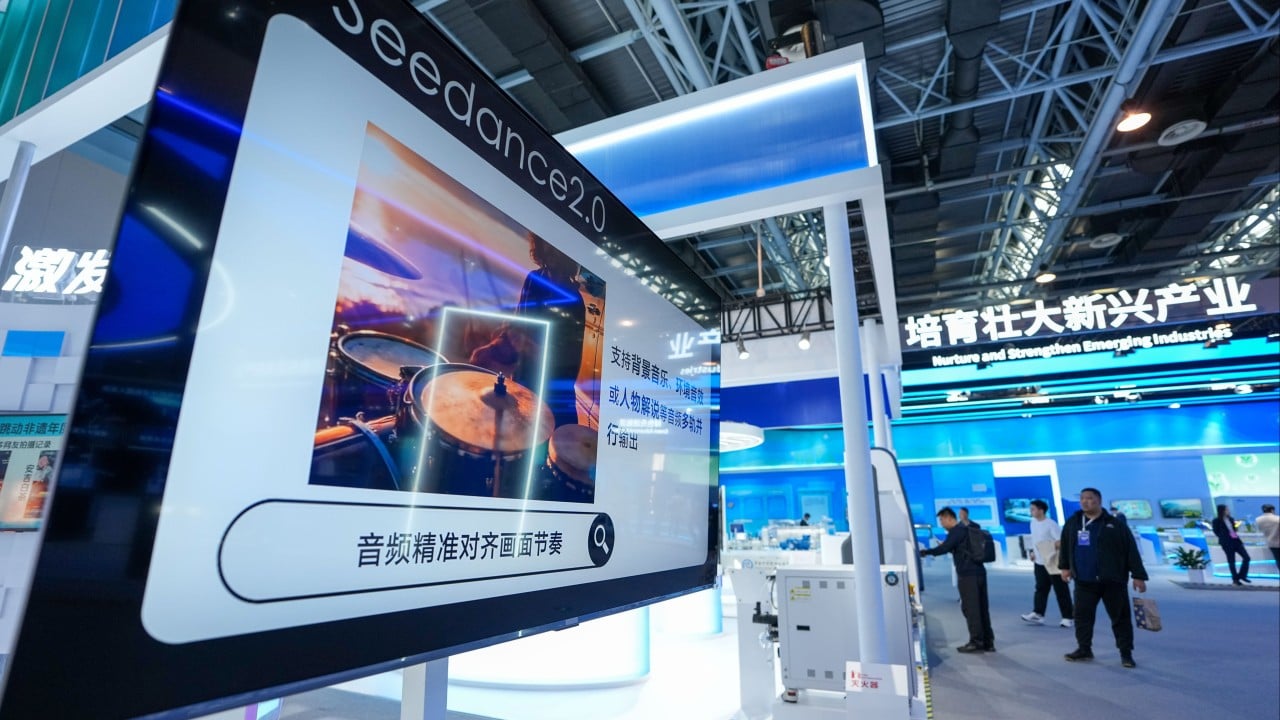

When ByteDance recently upgraded its generative AI video suite, Seedance 2.0, the headlines focused on the technical specifications and the impending global roll-out. They talked about efficiency. They talked about market share. But they missed the heartbeat of the story: the desperate, high-stakes scramble to build a fence around human identity before the machines finish blurring the lines entirely.

The Ghost in the Machine

Seedance 2.0 isn't just another software update. It is a massive leap in the ability to conjure reality out of thin air. For a small business owner in Ohio, this means they can create a professional-grade commercial without a camera crew, a lighting director, or a six-figure budget. They type a few sentences, and the pixels dance into a cinematic masterpiece.

But there is a shadow attached to that light.

As these tools move from experimental toys to the engines of global commerce, we are facing a crisis of trust. If anyone can make a video of anyone saying anything, the currency of the visual world—our "seeing is believing" instinct—devalues to zero. ByteDance knows this. They aren't just shipping a video generator; they are shipping a digital notary.

The core of the Seedance 2.0 update is a sophisticated invisible watermarking system. Think of it as a microscopic tattoo etched into every frame of video. You can't see it with the naked eye, and it is built to survive cropping and filtering. It is a confession by the file itself, admitting, "I was made by an AI."

This isn't about vanity. It is about survival in an era where the deepfake is becoming the default.
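
For readers curious what an invisible watermark can look like in practice, here is a minimal sketch of one classic approach: a keyed spread-spectrum mark that is detected by correlation. It is not ByteDance's method, which the announcement does not describe. The function names, the key, and the strength and threshold values are all illustrative assumptions, and a frame is simplified to a flat list of grayscale values.

```python
import random


def keyed_pattern(key: int, n: int) -> list[int]:
    """Pseudo-random +1/-1 sequence that only a holder of the key can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(frame: list[float], key: int, strength: float = 3.0) -> list[float]:
    """Nudge each pixel up or down by `strength`, following the keyed pattern."""
    pattern = keyed_pattern(key, len(frame))
    return [min(255.0, max(0.0, p + strength * s)) for p, s in zip(frame, pattern)]


def score(frame: list[float], key: int) -> float:
    """Correlate the frame with the keyed pattern.

    The result sits near `strength` for a marked frame and near zero otherwise.
    """
    pattern = keyed_pattern(key, len(frame))
    mean = sum(frame) / len(frame)
    return sum((p - mean) * s for p, s in zip(frame, pattern)) / len(frame)


def is_marked(frame: list[float], key: int, threshold: float = 1.5) -> bool:
    """Hypothetical decision rule: call the frame marked if the score clears the threshold."""
    return score(frame, key) > threshold
```

Each pixel moves by only a few brightness levels out of 255, too little for the eye to notice, yet the correlation across tens of thousands of pixels adds up to a clear signal for anyone holding the key. A toy like this tolerates mild noise but not cropping or resizing, which knock the pattern out of alignment; surviving those edits is the hard part that a production system has to solve.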

The Invisible Shield

Imagine a photographer who spends ten years mastering the way light hits a mountain at dawn. They understand the texture of the snow and the specific, fleeting blue of the shadows. Then, an algorithm swallows their entire portfolio and begins spitting out "original" mountain scenes that look exactly like their life's work.

The photographer hasn't just lost a sale. They've lost their thumbprint on the world.

ByteDance’s new IP safeguards are an attempt to address this visceral sting. Seedance 2.0 introduces stricter controls over how intellectual property is used and recognized within the generative process. They are trying to build a system that respects the "who" behind the "what."

The technical challenge is immense. To protect IP, the system has to recognize it first. It has to distinguish between a generic sunset and a sunset captured with a specific, proprietary artistic style. It’s a game of cat and mouse played at the speed of light. ByteDance is betting that by baking these safeguards into the foundation of Seedance, they can convince wary global regulators and skeptical creators that their platform isn't a pirate ship, but a protected harbor.

The Weight of a Watermark

The global roll-out of these tools is a logistical mountain, but the psychological shift is even steeper. In many ways, ByteDance is responding to a growing global anxiety. Governments from Brussels to Washington are breathing down the necks of AI developers, demanding transparency. They want to know where the data came from and how the public will recognize AI-generated content when they see it.

The watermark is the answer to a question we are all starting to ask: "Is this real?"

But "real" is a slippery word. If a video of a sunset is indistinguishable from a real sunset, does the watermark make it less beautiful? If a virtual influencer provides genuine comfort to a lonely teenager, does the "AI-generated" tag make that connection a lie?

These are the questions that engineers can't code away.

ByteDance's strategy is to lead with the shield. By bolstering Seedance 2.0 with these protections before the global launch, they are trying to set the standard for what "responsible" AI looks like. They are acknowledging that the power to create is also the power to deceive, and that the only way to keep the world from descending into a hall of mirrors is to provide a map.

The Cost of Entry

There is a quiet tension in the rooms where these decisions are made. On one hand, you have the drive for "limitless" creativity—the dream of a world where anyone can manifest their imagination instantly. On the other, you have the sobering reality of copyright law, personal rights, and the potential for mass disinformation.

The safeguards in Seedance 2.0 are a compromise. They add friction. They add oversight. They might even slow down the "magic" just a little bit.

But friction is what keeps us from sliding off the edge.

Consider the hypothetical case of a small animation studio in France. They have a signature character, a whimsical fox that has become a national icon. Without IP safeguards, Seedance 2.0 could be used by a competitor to generate a thousand videos of that fox doing things the original creators would never approve of. The studio would be powerless. Their brand would be diluted into noise.

The new safeguards are meant to give that studio a "kill switch" or at least a way to claim their territory. It is an acknowledgment that in the digital age, data is the new land, and we are currently in the middle of a massive, chaotic land grab.

The Human Core

Behind the lines of code and the corporate press releases, there are people trying to figure out how to be human in a world that is becoming increasingly synthetic. We want the convenience of AI. We want the speed. We want the ability to create without the technical hurdles.

But we don't want to lose ourselves.

The watermarking in Seedance 2.0 is a small, digital white flag. It is a sign of surrender to the fact that we need boundaries. We need to know where the human ends and the machine begins. Even if the machine is brilliant. Even if the machine is perfect.

The global roll-out will be the true test. As Seedance moves into different cultures with different laws and different definitions of "truth," these safeguards will be pushed to their breaking point. There will be hacks. There will be workarounds. There will be moments where the system fails.

But the effort itself tells us something important. It tells us that even the giants of the AI world recognize that a world without trust is a world where nothing—not even the most beautiful AI-generated video—has any value.

We are entering an era where the most important thing a creator can own is their authenticity. Not their skill, not their equipment, and not their budget. Just the simple, verifiable fact that they were there. That they felt it. That they made it.

The watermark isn't just a technical requirement. It is a desperate attempt to save the meaning of the word "original" before it vanishes forever.

The screen in Seoul flickers. The creator watches her digital twin and reaches out to touch the glass. The twin mimics the movement, a perfect reflection of a person who isn't actually there. Somewhere deep in the frames, an invisible mark says this isn't real.

For now, that has to be enough.