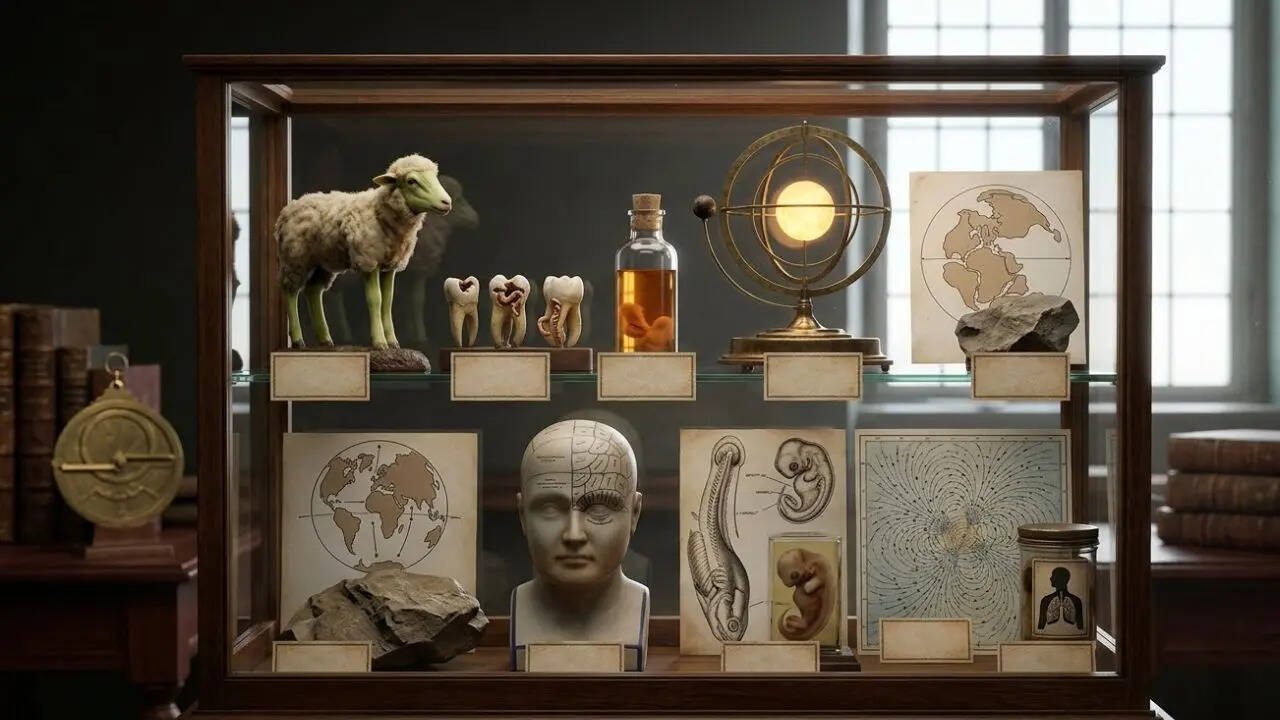

The progression of scientific knowledge is not a linear accumulation of facts but a series of structural displacements. When a dominant theory is discarded, it is rarely because it was "wrong" in a vacuum; rather, it became mathematically or observationally incompatible with a growing body of more precise data. To understand why theories like Phlogiston or Miasma persisted, one must analyze the Epistemic Utility they provided at the time. These frameworks offered predictive power and a logical scaffolding for the available evidence, however incomplete. By deconstructing these obsolete models through the lens of modern thermodynamics, biology, and physics, we can identify the specific logical inflection points where they failed.

The Thermodynamic Debt of Phlogiston Theory

In the 18th century, the Phlogiston theory served as the primary framework for understanding combustion and oxidation. The core postulate was that all combustible objects contained "phlogiston," a colorless, odorless, tasteless, and weightless substance that was released during burning.

The logic followed a simple subtractive equation:

Fuel = Calx (Ash) + Phlogiston (Released to air)

The failure of this model occurred during the transition from qualitative observation to quantitative measurement. When chemists like Antoine Lavoisier began weighing substances before and after combustion, they discovered that metals actually gained weight when burned. To preserve the theory, proponents argued that phlogiston possessed "negative gravity."

The collapse of Phlogiston was inevitable once the Law of Conservation of Mass was formalized. The model lacked a mechanism to account for the absorption of atmospheric oxygen. Phlogiston was an attempt to explain an exothermic reaction without understanding the role of the oxidizing agent. It failed because it treated heat and chemical potential as a material substance rather than a state change or an exchange of energy.
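The weight-gain observation can be checked with a simple mass balance. The sketch below is an illustration only: magnesium is chosen as the metal for convenience (the text does not name one), the reaction 2 Mg + O2 -> 2 MgO is assumed to go to completion, and the function name `oxide_mass_from_metal` is hypothetical.

```python
# Molar masses in g/mol (standard atomic weights, rounded).
M_MG = 24.305
M_O = 15.999


def oxide_mass_from_metal(metal_mass_g: float) -> float:
    """Mass of MgO formed when metal_mass_g of Mg burns completely.

    Assumes the reaction 2 Mg + O2 -> 2 MgO, so each mole of Mg
    binds exactly one mole of oxygen atoms taken from the air.
    """
    moles_mg = metal_mass_g / M_MG
    return moles_mg * (M_MG + M_O)


metal = 10.0  # grams of metal before burning (illustrative value)
calx = oxide_mass_from_metal(metal)
oxygen_absorbed = calx - metal

print(f"metal before burning: {metal:.2f} g")
print(f"calx after burning:   {calx:.2f} g")
print(f"oxygen taken up:      {oxygen_absorbed:.2f} g")
```

The calx comes out heavier than the metal, which is the opposite of what the subtractive equation predicts. Once the absorbed oxygen is counted, mass is conserved without any appeal to a substance with negative weight.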

Miasmatic Transmission and the Infrastructure of Error

Before the validation of Germ Theory in the late 19th century, the medical establishment operated under the Miasma Theory. This framework suggested that diseases like cholera, chlamydia, and the Black Death were caused by "bad air" or noxious exhalations from decomposing organic matter.

Miasma theory was not entirely devoid of empirical utility. Because it prioritized the removal of filth and the improvement of ventilation, it inadvertently led to better sanitation. However, the cause-and-effect mapping was flawed:

- The Vector Blindness: By focusing on smell (the symptom of decay), practitioners ignored the true vectors—microorganisms in water and food.

- The Correlation Trap: Proximity to sewage correlated with disease, but the causal mechanism was biological, not atmospheric.

The structural shift occurred when John Snow mapped the 1854 Broad Street cholera outbreak. His data-driven approach bypassed the atmospheric hypothesis by isolating a single water pump as the source. This signaled the transition from Symptomatic Observation (it smells bad) to Microbial Logic (specific pathogens require specific interventions).

The Luminiferous Aether and the Medium Fallacy

Nineteenth-century physicists faced a conceptual bottleneck: if light is a wave, what is the medium through which it travels? In every other observed phenomenon, waves required a physical substrate (sound through air, ripples through water). They hypothesized the "Luminiferous Aether," a weightless, frictionless, and invisible substance that permeated the entire universe.

Within classical physics, the Aether seemed a logical necessity: every known wave needed something to wave in. It also required extreme physical properties: it had to be rigid enough to support high-frequency light waves, yet fluid enough to allow planets to pass through it without drag.

The Michelson-Morley experiment in 1887 was designed to detect the "aether wind" by comparing the speed of light along perpendicular directions. It produced a null result: no difference was measured, whatever the direction of the Earth's motion. This undermined the assumption that light waves need a mechanical medium. The Aether was eventually rendered obsolete by Einstein’s Special Relativity (1905), which discarded the need for a medium by treating light as an electromagnetic field that propagates through the vacuum of space-time.
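To see why the outcome was so striking, it helps to estimate the signal the experimenters expected. The sketch below uses the standard first-order estimate for the fringe shift on rotating the interferometer by 90 degrees, shift ≈ 2·L·v² / (λ·c²). The numeric inputs are assumptions rather than figures from this article: an effective arm length of about 11 m, light of roughly 500 nm, and the Earth's orbital speed of about 30 km/s standing in for the aether wind.

```python
C = 299_792_458.0  # speed of light in m/s


def expected_fringe_shift(arm_length_m: float, wavelength_m: float,
                          aether_speed_m_s: float) -> float:
    """First-order aether-theory prediction for the fringe shift
    seen when the interferometer is rotated by 90 degrees."""
    beta_squared = (aether_speed_m_s / C) ** 2
    return 2.0 * arm_length_m * beta_squared / wavelength_m


# Assumed, commonly quoted inputs (not taken from the article).
ARM_LENGTH_M = 11.0      # effective optical path per arm
WAVELENGTH_M = 500e-9    # visible light
ORBITAL_SPEED = 3.0e4    # Earth's orbital speed, m/s

shift = expected_fringe_shift(ARM_LENGTH_M, WAVELENGTH_M, ORBITAL_SPEED)
print(f"expected shift: {shift:.2f} fringes")
```

Under these assumptions the prediction is a bit over 0.4 of a fringe, which is why the absence of any comparable shift was so hard to reconcile with a stationary aether.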

The Humoral Imbalance and Biological Determinism

For over two millennia, Western medicine was governed by Humorism. This system classified human health based on the balance of four distinct bodily fluids: blood, phlegm, black bile, and yellow bile.

The model was built on a Macro-Micro Correspondence. It linked the fluids to the four seasons, the four elements, and four temperaments (sanguine, phlegmatic, melancholic, and choleric). From a strategic standpoint, Humorism was a closed-loop system; any ailment could be categorized and treated by restoring balance through bloodletting or dietary changes.

The failure of Humorism was its lack of Granular Specificity. It attempted to explain all pathology through a single, holistic variable (balance). As anatomy and cellular biology evolved, it became clear that health was the result of highly specific chemical signals, endocrine functions, and cellular interactions. The "balance" was not between four fluids, but between thousands of complex, interlocking systems that Humorism was too blunt an instrument to measure.

Preformationism and the Scaling Paradox

In the early days of microscopy, many biologists believed in Preformationism—the idea that organisms develop from miniature versions of themselves. Some "ovists" believed the tiny human (homunculus) resided in the egg, while "spermists" believed it was in the sperm.

This theory avoided the difficult question of how complex structures could emerge from formless matter (epigenesis). However, it created an Infinite Regress Paradox. If every human contained a miniature human, and that miniature human contained another, then all future generations must have existed, nested like Russian dolls, inside the first human.

This model collapsed under the weight of its own scaling requirements. It had already lost ground to cell theory and embryological observation in the 19th century, and it could not survive the discovery of DNA and the understanding of genetic coding. Preformationism was a "hardware" solution to a "software" problem; it assumed that form required a pre-existing physical template rather than a set of instructions that direct the assembly of matter over time.
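The scaling problem can be made concrete with a rough count of how many nested generations fit before each copy would have to be smaller than an atom. Everything numeric here is an assumption for illustration: a starting size of 0.1 mm (roughly the diameter of a human egg cell), an atomic scale of about 1e-10 m, and a hypothetical linear shrink factor per generation. The function name is also hypothetical.

```python
def generations_before_atomic_limit(start_size_m: float,
                                    shrink_factor: float,
                                    limit_m: float = 1e-10) -> int:
    """Count how many nested generations stay at or above limit_m.

    Each nested generation is assumed to be shrink_factor times
    smaller (in linear size) than the one that contains it.
    """
    if shrink_factor <= 1:
        raise ValueError("shrink_factor must be greater than 1")
    size = start_size_m
    generations = 0
    while size / shrink_factor >= limit_m:
        size /= shrink_factor
        generations += 1
    return generations


EGG_SIZE_M = 1e-4  # assumed starting size, about 0.1 mm

for factor in (2, 50):
    n = generations_before_atomic_limit(EGG_SIZE_M, factor)
    print(f"shrink factor {factor}: {n} nested generations fit")
```

Even with an implausibly gentle shrink factor of 2, only a couple of dozen generations fit above the atomic scale, far short of "all future generations." The regress runs out of physical room almost immediately.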

Spontaneous Generation and the Entropy Gap

The belief that complex life could arise from non-living matter—maggots from rotting meat, mice from piles of grain—persisted because of a failure to observe Closed-System Variables. Scientists lacked the tools to see the microscopic eggs or spores that initiated these life cycles.

Louis Pasteur's swan-neck flask experiment provided the definitive data point. By using a vessel that allowed air to enter but trapped dust and microbes in a curve of the glass, he showed that sterilized broth stayed free of growth as long as those contaminants could not reach it. This shifted biology from a model of Spontaneous Emergence to Biogenesis (life only comes from life).

The persistence of spontaneous generation was a result of observing the end state of a biological process without seeing the input. It was a failure of resolution, where the macro-scale result was attributed to a magical property of the substrate rather than an external biological contaminant.

The Expanding Earth and Tectonic Displacement

Before the mid-20th century acceptance of Plate Tectonics, some geologists proposed the Expanding Earth theory. To explain why the continents seemed to fit together like puzzle pieces, they suggested that the Earth’s radius was increasing, pushing the landmasses apart.

This theory attempted to solve the Fit Problem without needing a mechanism for subduction (the process of one plate sliding under another). However, it faced an insurmountable Mass-Energy Constraint. There was no known physical process that could create the massive amount of matter required to increase the Earth’s volume without significantly altering its gravitational pull.
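The constraint can be sketched with Newtonian surface gravity, g = G·M / R². The example below compares two ways a smaller past Earth could be modeled: the same mass packed into a smaller radius, or the same density with less mass. The past radius of 60% of today's value is a hypothetical figure chosen only for illustration, and the constants are rounded reference values.

```python
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
M_EARTH = 5.972e24   # present-day mass of the Earth, kg
R_EARTH = 6.371e6    # present-day mean radius, m


def surface_gravity(mass_kg: float, radius_m: float) -> float:
    """Newtonian surface gravity of a uniform sphere, in m/s^2."""
    return G * mass_kg / radius_m ** 2


PAST_RADIUS_FRACTION = 0.6  # hypothetical: past radius as a share of today's
r_past = PAST_RADIUS_FRACTION * R_EARTH

g_now = surface_gravity(M_EARTH, R_EARTH)

# Case A: mass unchanged, so the smaller Earth was much denser.
g_const_mass = surface_gravity(M_EARTH, r_past)

# Case B: density unchanged, so mass scales with volume (radius cubed).
m_past = M_EARTH * PAST_RADIUS_FRACTION ** 3
g_const_density = surface_gravity(m_past, r_past)
mass_to_create = M_EARTH - m_past

print(f"today:            g = {g_now:.2f} m/s^2")
print(f"constant mass:    g = {g_const_mass:.2f} m/s^2")
print(f"constant density: g = {g_const_density:.2f} m/s^2")
print(f"mass that must appear in case B: {mass_to_create:.2e} kg")
```

Either branch has a cost. Keeping the mass fixed implies a past surface gravity several times today's, while keeping the density fixed requires most of the planet's present mass to have appeared from nowhere.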

The discovery of mid-ocean ridges and subduction zones provided a "zero-sum" alternative: the crust is constantly being created in some areas and destroyed in others. This model accounted for the movement of continents without violating the laws of physics regarding the conservation of mass.

The Neural Transfer of Memory and the Flatworm Error

In the 1950s and 1960s, experiments on planarians (flatworms) led to the "memory transfer" hypothesis. Researchers claimed that if they trained a flatworm to navigate a maze and then fed its ground-up remains to another worm, the second worm would "inherit" the memory.

This led to a surge of interest in Chemical Mnemonics, the idea that memories were stored in RNA molecules that could be physically transferred between organisms. This was an elegant, if gruesome, explanation for learning.

However, the experiments were notoriously difficult to replicate. The "learning" observed in the second group of worms was eventually attributed to non-specific sensitization rather than the transfer of discrete data. Modern neuroscience has since moved to the Synaptic Plasticity model, where memory is stored in the strength and configuration of connections between neurons (the "connectome") rather than in a harvestable chemical substance.

The Fixed-State Universe and the Static Bias

The Steady State Theory suggested that the universe has no beginning and no end. As it expands, new matter is continuously created to maintain a constant density. This was the primary rival to the Big Bang Theory because it avoided the "singularity" problem—the moment where the laws of physics break down.

The Steady State model failed because it could not account for the Cosmic Microwave Background (CMB). If the universe had always existed in a steady state, we would not see the uniform "afterglow" of a massive expansion event. The discovery of the CMB in 1964 provided the empirical "smoking gun" that the universe had a definitive, high-energy origin point.

The Static Bias was a psychological preference for a universe that was eternal and unchanging, which clouded the interpretation of early redshift data indicating that everything was moving away from everything else.

The Structural Playbook for Modern Theory Assessment

To avoid the pitfalls of these historical errors, we must apply a rigorous filter to current scientific models. When evaluating a theory, prioritize the following criteria:

- Mechanism over Correlation: Does the theory explain how the change occurs at a granular level, or does it merely note that two things happen at the same time?

- Dimensional Compatibility: Does the theory violate established laws in adjacent fields? (e.g., Does a biological theory violate the Second Law of Thermodynamics?)

- Predictive Precision: Can the model predict a specific, measurable outcome that no other model can explain?

- Parsimony (Occam’s Razor): Does the theory require the invention of new, undetectable substances (like Aether or Phlogiston) to remain viable?

The strategic move is to treat every current consensus as a High-Probability Hypothesis rather than an absolute truth. In fields like quantum gravity or dark matter, we are likely using "Aether-equivalent" placeholders: concepts that allow our math to work today but will be discarded when a more granular, data-dense framework arrives.
